So you've Nobbled your Git

Git protects you from some sorts of errors, by letting you create a history which you can then roll back through. On the other hand, it gives you some very powerful commands with which an incautious person can wreak havoc. In fact, everybody who uses git has probably done something catastrophic at least once. It happens. So what can you do about it?

Luckily, Git gives you some powerful tools for fixing things that it lets you do, and there are some sneaky tricks for things it doesn't deliberately help with, but does by coincidence. For some things, you just have to hope your backups have been keeping up. But how do you tell which is which, and what do you do?

Things Wot I have done to my Repos

- Checked out a file and lost local changes (`git checkout <filename>`)

- Done `git pull` and got a merge commit I didn't want

- Done `git reset HEAD~<N>` to go back before the merge commit, and gone too far

- Run `git reset --hard` instead of `git reset`

- Checked out a feature branch under the name of master and then pushed it

If you're thinking I might just be a bit of an idiot, well maybe, but not because of these things. They happen. Even the last one, although that was a bit of a perfect storm of errors combining into horror. They happen to everybody, even the experienced. Sites like DangitGit exist for a reason! The trick is to do them less and fix them better.

Why these things are Good but also Bad

In order:

Checked out a file and lost local changes.

`Git checkout` for a single file means "restore this file to the state in the index" which, in simpler terms means "put this file back to what git thinks it is" (in most circumstances). For a file with staged changes, these are kept, i.e. you get the state from the last commit AND any staged changes.

This is handy when you're making prospective changes and want a way to undo parts that didn't work. You stage the bits that did work, and you checkout, and you try again.

I used it a lot recently because I was writing a script to find-replace things in code. I wanted changes to the script to be kept, but it had gone wrong, so I wanted all the code to be put back to how it had been. `git add <script>` and `git checkout ./` and I could easily undo the horrors my script had wrought.

Why you should BEWARE: you are asking git to do something with things it doesn't know about (your unstaged, uncommitted changes). Git happily does this and it doesn't care. Unstaged changes are none of its business and it throws them away. Git can't help you get them back. It never had them.
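Here is a minimal sketch of that behaviour in a throwaway repository, so nothing real is at risk. The file name and the identity settings are placeholders I made up for the demo.

```shell
set -eu
# Throwaway repository in a temp directory (all names here are placeholders).
repo=$(mktemp -d)
cd "$repo"
git init -q
git config user.email "you@example.com"
git config user.name "You"

echo "committed" > notes.txt
git add notes.txt
git commit -q -m "first commit"

echo "staged" > notes.txt
git add notes.txt            # git now holds a copy of this version in the index
echo "unstaged" > notes.txt  # git has never seen this version

git checkout -- .            # restore the working tree from the index
result=$(cat notes.txt)
echo "notes.txt now contains: $result"
```

The staged version survives and the unstaged edit is simply gone, which is exactly the handy-but-dangerous behaviour described above.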

Done `git pull` and got a merge commit I didn't want

You try to `git push` and are told your branch is behind the remote. The instructions say to do `git pull` first. Git pull is nice and simple, and mostly you don't have to do much more. You pull, you push, job done. This is nice when you really did make distinct changes and are happy with a merge commit.

Why you should BEWARE: merge commits are ugly. Sometimes they are a tolerable evil, but they make the history more complicated and can be tricky when you're trying to go back in time. Don't just blindly `git pull`. Sometimes you need to take the extra time and rebase your local changes onto the remote.

Luckily, this is all doing "gitty" things, so git will help you! You can simply go back (git reset) to before the merge, and fix things properly.
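As a rough sketch of "go back and fix things properly", the script below builds two throwaway repositories, does the blind pull, undoes the merge, and then rebases instead. The repository names `upstream` and `mine` and the file names are invented for the demo. I pass `--no-rebase` explicitly because recent git versions refuse to guess how to reconcile diverged branches.

```shell
set -eu
base=$(mktemp -d)
cd "$base"

# "upstream" stands in for the remote; "mine" is my clone of it.
git init -q upstream
cd upstream
git config user.email "you@example.com"
git config user.name "You"
echo "one" > a.txt
git add a.txt
git commit -q -m "initial"

git clone -q "$base/upstream" "$base/mine"

# Somebody else commits upstream...
echo "theirs" > theirs.txt
git add theirs.txt
git commit -q -m "their change"

# ...while I commit locally, so the histories diverge.
cd "$base/mine"
git config user.email "you@example.com"
git config user.name "You"
echo "mine" > mine.txt
git add mine.txt
git commit -q -m "my change"

git pull -q --no-rebase --no-edit     # the blind pull: creates a merge commit
merges_after_pull=$(git rev-list --merges --count HEAD)

git reset -q --hard HEAD~1            # first parent of the merge: my commit before the pull
git pull -q --rebase                  # replay my commit on top of theirs instead
merges_after_rebase=$(git rev-list --merges --count HEAD)
commits=$(git rev-list --count HEAD)
echo "merge commits: $merges_after_pull, then $merges_after_rebase; total commits: $commits"
```

Note that the hard reset here is only safe because the working tree was clean. With uncommitted work lying around, back it up first.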

Done `git reset HEAD~<n>` to go back before the merge commit, and gone too far

Suppose you accidentally did that blind git pull, and realised you now have an ugly merge commit you don't want. Ah, you think, I can just reset back to before I did that. This is really handy - I can go back to any of the old states and I can branch from it, or I can go back "undoing the commits" but keeping the changes, so I can refactor what went into what commit etc.

Why you should BEWARE: Counting is a real downfall of mine (how many days ago was Sunday again?), and I got N wrong and went back too far. Now I've "lost my commits". I have the changes, but I don't know which of them went into what commit and I don't want to have to recreate that! If I was even dumber and did a hard reset, I don't even have the changes anymore, and I really don't want to redo all of those. But, the changes had been committed, so maybe there's a fix?

Again, this was a "gitty" thing, and I can fix it. I didn't delete the commit entirely (yet), I just took it out of local history. At worst, it should still exist on my remote (hopefully I pushed it before I messed up, but in this case, I had not). See below for the solution.

Run `git reset --hard` instead of `git reset`

I've made some changes, I've staged some things, and I realise actually it was all bunk. Maybe it was a failed experiment, and it became clear only partway through that it wasn't going to work. Maybe I was just messing about. Regardless, there are circumstances where I want to go back to a clean slate, and put everything back into the state of the last commit. This is the task of `git reset --hard`. It means "wipe it all clean. Put me back as though I had just cloned this/hadn't done anything since my last commit."

Why you should BEWARE: Well... read again carefully what this does. All your changes, staged or not, trash them. Reset it all! Now compare to what `git reset` (without `--hard`) does. That's a bit different, isn't it? Hard resets are very brutal. They have their place, but must be handled with care. Recently I did a hard reset I didn't mean to. I had a few hours of work, staged but not committed, and it had vanished. Bugger.
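To make the comparison concrete, here is a small sketch in a throwaway repository. The file name and contents are placeholders, not my actual lost work.

```shell
set -eu
repo=$(mktemp -d)
cd "$repo"
git init -q
git config user.email "you@example.com"
git config user.name "You"
echo "committed" > work.txt
git add work.txt
git commit -q -m "first commit"

echo "hours of work" > work.txt
git add work.txt

git reset -q                     # plain reset: unstages, leaves the file alone
after_plain=$(cat work.txt)

git add work.txt
git reset -q --hard              # hard reset: index AND working tree go back to HEAD
after_hard=$(cat work.txt)

echo "after git reset:        $after_plain"
echo "after git reset --hard: $after_hard"
```

One word of difference on the command line, and the file either keeps my edits or goes back to the last commit.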

So can I fix it? Well... kinda. While I did a "gitty" thing, git obeyed me, and threw my work away. I asked it to. However, all is not lost. I can't just ask git to undo my mistake, but some of it might remain, if I can work out how to find it. See below!

Checked out a feature branch under the name of master and then pushed it

Being able to have a local branch with one name map to a remote branch of another name is handy. For instance, say my remote repo likes to separate hotfix from feature branches, or use a developer's name as part of the branch. I don't need to remember this when I make local branches, and I don't have to fiddle around renaming branches locally. I just push like `git push origin local_name:remote_name`. This is handy.
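A quick sketch of that push form, using a bare repository in a temp directory as a stand-in for the shared remote. The branch names `quickfix` and `hotfix/dev-name/quickfix` are made up for illustration.

```shell
set -eu
base=$(mktemp -d)
git init -q --bare "$base/remote.git"    # stand-in for the shared remote
git init -q "$base/work"
cd "$base/work"
git config user.email "you@example.com"
git config user.name "You"
git remote add origin "$base/remote.git"

echo "fix" > fix.txt
git add fix.txt
git commit -q -m "a fix"
git branch -m quickfix                    # my short local name

# local_name:remote_name, so the remote branch gets the longer policy-friendly name
git push -q origin quickfix:hotfix/dev-name/quickfix
remote_branches=$(git ls-remote --heads origin)
echo "$remote_branches"
```

The remote only ever sees the long name, while locally I keep typing the short one.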

I can also add more than one remote ("origin" is not special, it's just a default name) and push, pull etc from the one I want.

Why you should BEWARE: Mostly these features are handy, but I managed to make a real mess for myself. I added a new remote and tried to checkout a specific branch. I accidentally pulled a branch, and didn't notice that locally it was now called master. Later, I pushed the branch. I forgot to specify that I wanted to push to the remote, and I forgot to type the branch name. Had I remembered the latter, I would at least have seen an error that that name didn't seem to exist. Had I remembered the former, at least I'd only have nobbled the copy on my personal remote. The remote configuration let me push because I had high privilege. Oops!

Can I fix this? Luckily, yes. I didn't FORCE push, so all I have to do is take a deep breath, checkout back to what should be the tip of master, and forcibly push that. I should be very, very cautious here though. If I get this wrong, I can do a lot of damage. Force pushing is not something to take lightly. If this is not your project, buy the maintainer a stiff drink (or a cookie) and ask them politely to fix it for you.
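For the cautious, here is a sketch of that fix rehearsed in a sandbox first, which is where I'd try it before going anywhere near the real remote. Everything here (the bare `remote.git`, the file names) is invented for the demo. I've used `--force-with-lease` rather than a plain force push, as it refuses to overwrite the branch if somebody else has pushed since I last looked. That is my choice for the sketch, not the only way to do it.

```shell
set -eu
base=$(mktemp -d)
git init -q --bare "$base/remote.git"
git init -q "$base/work"
cd "$base/work"
git config user.email "you@example.com"
git config user.name "You"
git remote add origin "$base/remote.git"

echo "stable" > app.txt
git add app.txt
git commit -q -m "good state"
main_branch=$(git rev-parse --abbrev-ref HEAD)   # master or main, depending on git config
git push -q origin "$main_branch"
good=$(git rev-parse HEAD)                        # the commit the branch SHOULD point at

# The accident: feature work committed under the main branch's name, then pushed.
echo "half-finished" > feature.txt
git add feature.txt
git commit -q -m "feature work"
git push -q origin "$main_branch"

# The fix: point the remote branch back at the good commit.
# --force-with-lease refuses if the remote moved since I last fetched or pushed.
git push -q --force-with-lease origin "$good:refs/heads/$main_branch"
remote_tip=$(git ls-remote origin "refs/heads/$main_branch" | cut -f1)
echo "remote $main_branch is back at $remote_tip"
```

The feature commit is not lost by this. It still exists locally and can be moved onto a properly named branch afterwards.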

HELP, What do I do NOW?

So, assume you've done something similar to one of the above. You've "lost" some work that was staged, or committed, so that git knew about it. Can git help you? First, some background.

Every Repository is Equal

Introductions to Git often talk about its character as a "distributed" system. Rather than the older style where there was one "canonical" repo, and people could locally have a subordinate copy, in git "all repositories are equal". This is true for most stuff. Commits, history, objects etc are stored in every copy of the repo, and none of them are special.

However, it is not true of absolutely everything. If you have worked with "git tags" you may recall these aren't pushed by default with the rest of the content. You have to push them specially. Also, (obviously), none of the untracked files in your repo are part of anybody else's repo. You can also have git configured on your machine to e.g. use a global .gitignore file.

Local Specialities

There are a few other things which exist in your local git repo, but aren't part of your remotes, or anybody else's copy. This is one of the ways in which git is not a backup - pushing to a remote is about sharing stuff, not preserving it. A git remote is a copy of some of your stuff, but not uncommitted changes, or the local parts of your repo.

For us, here, the things we're interested in are the git "reflog", which is sort of a local history of some of the "gitty" things you've done, and the git "object directory". Git turns everything into these "objects", which is why you see messages like "Counting objects" when you push or pull. Things that you undo, or never confirm (like staged-but-never-committed changes) might still exist in the objects database, but in an "orphaned" state - not part of the repo, but not yet gone forever.

Taking out the Trash

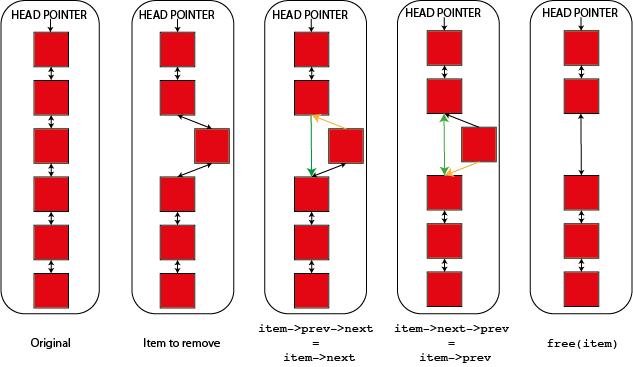

Git is strictly a garbage collected system. "Garbage collected" languages are a class where, when something is freed or deleted, instead of it being immediately wiped, it is merely flagged somehow as "done with". At some point, often on schedule, or when the program isn't doing much else, the garbage collector comes and cleans things up, returning the memory to the program.

If you've watched things like "CSI" or other tech-jargon TV, you have probably seen somebody restoring apparently deleted files on a computer hard drive - this is a bit like garbage collection. When you delete a file from disk in the normal fashion, it would take time to overwrite every byte with a 0-byte, so instead the space is merely flagged as empty. For some time the file may be effectively still there, just "lost", and can be restored. Proper drive wiping involves overwriting (more than once, because magnets are complicated) with 0s. The recycle bin won't cut it.

The reflog and the object database for git are garbage collected. This means that objects created because git thought you were going to do something, even if you didn't, might still be there days, years, commits etc later.

Don't Push your Luck

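In most cases, any attempt to use these features to save you is a last-ditch effort, and the better approach is not to make the mistake in the first place. Since the object database is garbage collected, files hiding in it are living on borrowed time, and can be irreparably lost at any time. With default settings, git holds on to orphaned objects for roughly two weeks and reflog entries for 30 to 90 days before a clean-up is allowed to remove them, but don't count on it.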
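To see the borrowed time run out, this sketch orphans a staged file in a throwaway repository and then forces the clean-up with `git gc --prune=now`, instead of waiting for git to get round to it. File names and contents are placeholders.

```shell
set -eu
repo=$(mktemp -d)
cd "$repo"
git init -q
git config user.email "you@example.com"
git config user.name "You"
echo "committed" > work.txt
git add work.txt
git commit -q -m "first commit"

echo "staged only" > work.txt
git add work.txt
git reset -q --hard              # the staged version is now an orphaned object

# grep -c exits non-zero when it counts 0 matches, hence the "|| true".
before=$(git fsck --unreachable 2>/dev/null | grep -c "unreachable blob" || true)
git gc -q --prune=now            # force the clean-up immediately
after=$(git fsck --unreachable 2>/dev/null | grep -c "unreachable blob" || true)
echo "orphaned blobs before gc: $before, after gc: $after"
```

Once the garbage collector has been, there is nothing left for any of the tricks below to find. So if you've just lost something, don't run `git gc`.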

So, the Solutions

I am only going to talk about the solutions to two of the messes in the list above, namely the accidental hard reset to an older commit, and the accidental hard reset of staged changes. The things where you might need to do something not entirely "gitty" to fix them. You might notice both things involve resets...

The first "solution" for FUTURE occurrences of these problems is never to run a reset without first stashing everything, or backing it up, or similar, and to be certain the reset is the correct command first. But if you've already messed up, you want to fix it now. There is hope!

Fixing an "undone" commit

The first error, resetting back to `HEAD~<N>` and going too far, isn't that bad. For a start, if you've been pushing regularly, the work in the "lost" commits isn't gone, as you could always clone afresh. Luckily though, even if you never pushed, you can fix this. Git knew about those changes, and those commits. This can be reconstructed. Note that if you did a hard reset, the changes are apparently "gone", whereas a soft one leaves them there, but your commit details are gone (such as any picking of lines, or separation into multiple commits).

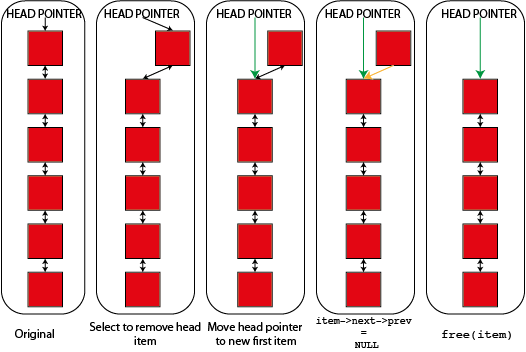

But the gist of the solution is as follows. Whenever I commit, checkout, reset, rebase or pull, the Git reflog records an entry for the move between the states, pointing at the commit I ended up on. The commits these entries point at are "real", even when they are no longer part of any branch. You can see this "history" using the command `git reflog`. You should see things like "commit: <commit msg>" and possibly "checkout: moving from <branch> to <branch>".

These states don't persist forever but they last long enough for this! I can simply backup my work (just in case), take a deep breath, and broach the "reset" command again, but more carefully this time. I carefully identify the point I made the error, and I ask git politely but firmly to undo it. In this case, I am looking for the line which says "<id> HEAD@{N}: reset: moving to <blah>" where "blah" might be "HEAD~2" or might be a commit-id or similar. I want to go back to just before this, so I pick the entry made just before it (the next line down, since the reflog lists the newest entry first) and make a note of the part "HEAD@{N}".

If I have any unstaged changes, or I have anything else I am not sure about I double check that I backed it up! We are about to run another reset. Getting this wrong can make things worse. Backup. Now.

Now, again carefully, I run `git reset --hard HEAD@{N}` where N is the thing I just worked out. Hopefully I am back where I wanted to be! Since the commits I had orphaned are no longer dangling, as they're now part of my branch again, they won't be cleaned up, and I have got them back. I breathe a sigh of relief and vow never to hard-reset again.
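The whole recovery can be rehearsed safely in a throwaway repository. In this sketch the three commits and the file name are invented, and because the bad reset is the very last thing I did, the entry I want is `HEAD@{1}`. In a real repo the number will depend on what has happened since.

```shell
set -eu
repo=$(mktemp -d)
cd "$repo"
git init -q
git config user.email "you@example.com"
git config user.name "You"
for n in 1 2 3; do
    echo "change $n" > file.txt
    git add file.txt
    git commit -q -m "commit $n"
done
tip=$(git rev-parse HEAD)

git reset -q --hard HEAD~2       # the mistake: only meant to go back one
git reflog                       # newest first; HEAD@{0} is the reset itself

# HEAD@{1} is the entry just before the reset, i.e. where HEAD was pointing.
git reset -q --hard "HEAD@{1}"
restored=$(git rev-parse HEAD)
content=$(cat file.txt)
echo "tip before: $tip"
echo "tip after:  $restored"
```

Quoting `"HEAD@{1}"` is a habit worth having, since some shells treat the braces specially.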

Fixing nobbled staged changes

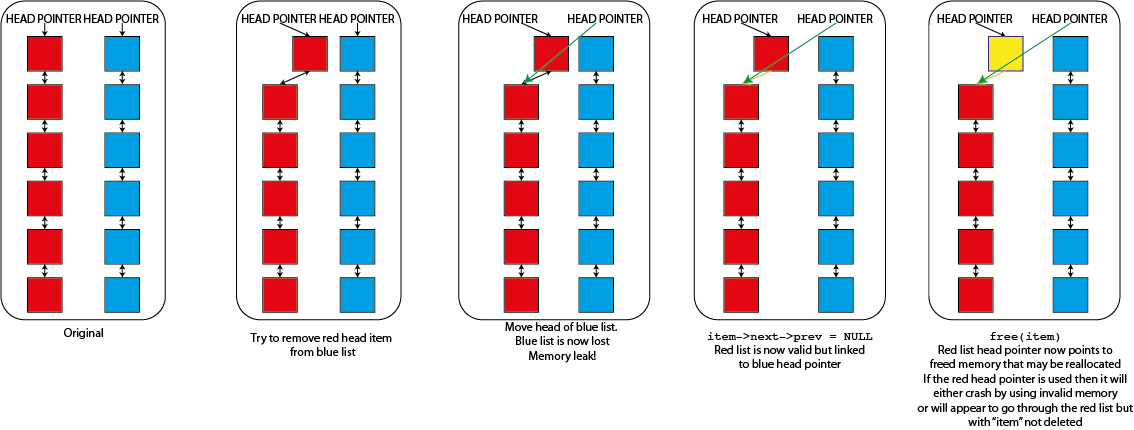

The previous error wasn't actually as bad as all that. All I had done was remove some entire commits from my branch history. It seems logical that git remembers them for a bit and can put them back. But in the case of staged changes, they were never part of a commit at all, so they're not in the reflog in any form.

Luckily, when we stage the changes git "gets things ready" and creates the object detailing the changes in the object database. But there is no longer any reference to them in the repository itself. They are just orphaned objects. If we can convince git to spit them out, at least we have our changes (or files) back, even if we have to do a bit of work to restore things completely.

The following solution comes from this link. I was able to save my situation using the simple method, because I didn't have many changed files, but the link also details a longer solution for really bad mess-ups. Basically, we have to work out which are the orphaned objects (not part of any commit, branch etc), which of these are files (commits etc can also be in here), and then get git to tell us the content. We can then either hand-pick what we want back, or write a script to spit it all into files and use our shell-scripting prowess to restore things.

The direct method is to run `git fsck --cache --unreachable $(git for-each-ref --format="%(objectname)")`, which asks git to spit out the ids of any unreachable objects. We can then show the content of each with `git show <id>`. If there's not much stuff, that should work.

If we get loads, AND the reset is the last thing we staged or committed, we can list objects in order of modification time using `find .git/objects/ -type f -printf '%TY-%Tm-%Td %TT %p\n' | sort` (the `-printf` option needs GNU find). This gives us ALL the objects, but we can cross reference the lists to find our lost stuff, or just go by hand. We can again use `git show` to view the objects, although in this case we have to turn each path into an id first: drop the `.git/objects/` prefix and the '/' between the two-character directory name and the file name.

Luckily for me, I only needed to restore one or two files, so I used the find command and did `git show` manually, but I was glad not to have to redo all my work!
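For anyone with more than a couple of files to rescue, here is a rough sketch of the "spit it all into files" approach, run in a throwaway repository. The `recovered` directory and the file contents are placeholders, and I've used the plain `git fsck --unreachable` form, which is enough for this simple case.

```shell
set -eu
repo=$(mktemp -d)
cd "$repo"
git init -q
git config user.email "you@example.com"
git config user.name "You"
echo "committed" > work.txt
git add work.txt
git commit -q -m "first commit"

echo "a few hours of work" > work.txt
git add work.txt
git reset -q --hard              # oops: the staged version is gone from the working tree

# List orphaned file contents ("blobs") and dump each into a recovery directory.
mkdir recovered
git fsck --unreachable 2>/dev/null | awk '$2 == "blob" { print $3 }' |
while read -r id; do
    git show "$id" > "recovered/$id"
done
recovered=$(cat recovered/*)
echo "recovered: $recovered"
```

The objects only hold file contents, not file names, so each rescued file is named after its object id and has to be matched up with where it belongs by hand.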

HELP, this Keeps Happening to Me!

If you keep ending up with these sorts of problems, there are a few handy tips.

- DO NOT PANIC. It is never helpful.

- STOP. Once you've messed things up, you're already thinking "If only I hadn't done that!" You might notice that a lot of the "solutions" to git problems can make things much worse. Once you're in a mess, stop. Backup what's left of your work. Make a new repository somewhere else to test fixes. Don't just plow on!

- Work out what you actually did wrong. The internet abounds with solutions to git problems, but unless you can detail exactly what went wrong, you won't find the right one.

- Backup and stash carefully in future. I said that git only "knows" about staged or committed files - it also knows about those you tell it about using the "stash" command. Carefully stashing changes before doing "risky" things can save you.

- Slow down, think hard, and never commit on a Friday afternoon. Git is a powerful tool, and should not be operated while tired, distracted, or under the influence. Don't try and do complicated gitty things late or short of time.
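The stashing tip from the list above can be sketched like this, again in a throwaway repository with made-up file names. The `-u` flag is there so that untracked files go into the stash too.

```shell
set -eu
repo=$(mktemp -d)
cd "$repo"
git init -q
git config user.email "you@example.com"
git config user.name "You"
echo "committed" > work.txt
git add work.txt
git commit -q -m "first commit"

echo "work in progress" > work.txt
echo "scratch" > new-idea.txt                   # untracked file

git stash push -q -u -m "before risky reset"    # -u also stashes untracked files
git reset -q --hard                             # the risky thing, now harmless
git stash pop -q                                # bring everything back

tracked=$(cat work.txt)
untracked=$(cat new-idea.txt)
echo "work.txt: $tracked / new-idea.txt: $untracked"
```

If the risky thing goes fine, the stash can simply be popped or dropped. If it goes wrong, the work is still sitting there waiting.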

Hopefully, this helps with the worst muck-ups you can do, git wise. But remember, nothing beats not doing it in the first place!

Heather Ratcliffe
